Data Process - Preprocess

Introduction

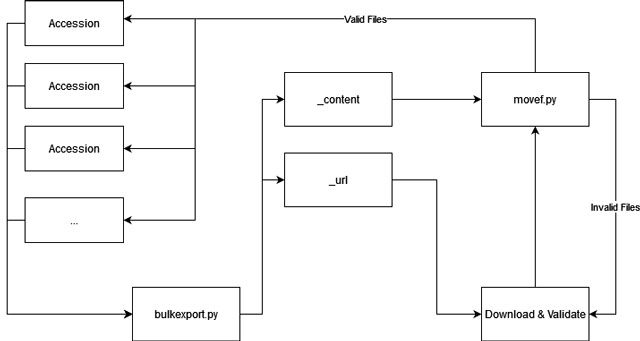

The download utility fetches files one by one and does not keep track of which accession each file came from. Because we analyze the data per accession, I need a way to restore each file to its original accession folder once it has been downloaded.

The Idea

The idea is to download accessions in batches (50 accessions per batch) and to record the source accession of each file while its file link is being extracted. Once the files are downloaded, the script reads the record and moves each file back to its corresponding folder.
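As a rough illustration of the idea above, here is a minimal Python sketch. It is not the actual script: the function names, the tab-separated record layout (file name, then accession), and the directory arguments are all assumptions made for the example. It also assumes file names are unique within a batch, since a name shared by two accessions would be ambiguous in such a record.

```python
import csv
import shutil
from pathlib import Path


def batch_accessions(accessions, batch_size=50):
    """Yield consecutive batches of at most batch_size accessions."""
    for start in range(0, len(accessions), batch_size):
        yield accessions[start:start + batch_size]


def write_record(record_path, links_by_accession):
    """Write one 'file name <TAB> accession' row per extracted file link.

    The record layout is hypothetical; links_by_accession maps an
    accession to the list of file links extracted for it.
    """
    with open(record_path, "w", newline="") as handle:
        writer = csv.writer(handle, delimiter="\t")
        for accession, links in links_by_accession.items():
            for link in links:
                file_name = link.rsplit("/", 1)[-1]
                writer.writerow([file_name, accession])


def restore_files(record_path, download_dir, accession_root):
    """Move each downloaded file into the folder of its source accession."""
    moved = []
    with open(record_path, newline="") as handle:
        for file_name, accession in csv.reader(handle, delimiter="\t"):
            source = Path(download_dir) / file_name
            if not source.exists():
                continue  # not downloaded yet, leave it for a later run
            target_dir = Path(accession_root) / accession
            target_dir.mkdir(parents=True, exist_ok=True)
            shutil.move(str(source), str(target_dir / file_name))
            moved.append((file_name, accession))
    return moved
```

Keeping the record as a flat file means the move step can be re-run safely: files that have not arrived yet are simply skipped and picked up on the next pass.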

Structure

Data Process

Once a file is validated and moved to a buffer area, movef.py moves it into its corresponding accession directory. After all pending files have been moved, the script scans every accession and compares its contents with its _content file. If an accession is complete, that is, all of its required files have been downloaded, it is moved to the final directory, where it waits for analysis. This is currently a semi-automatic step: the scan has to be triggered manually. Since we still need to replace the download list on each server by hand, there is no need to fully automate it for now.
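The completeness scan could look roughly like the sketch below. This is not the code in movef.py: it assumes that each accession directory contains its own _content file listing the required file names one per line, and the function names and directory arguments are hypothetical.

```python
import shutil
from pathlib import Path

CONTENT_FILE = "_content"  # assumed location: inside each accession directory


def is_complete(accession_dir):
    """Return True if every file listed in _content is present."""
    content_path = accession_dir / CONTENT_FILE
    if not content_path.is_file():
        return False
    required = {
        line.strip()
        for line in content_path.read_text().splitlines()
        if line.strip()
    }
    present = {p.name for p in accession_dir.iterdir() if p.is_file()}
    # An empty listing is treated as incomplete rather than trivially done.
    return bool(required) and required <= present


def promote_complete(accession_root, final_root):
    """Move every complete accession directory into final_root."""
    final_root = Path(final_root)
    final_root.mkdir(parents=True, exist_ok=True)
    promoted = []
    # sorted() builds the full list first, so moving directories
    # during the loop does not disturb the iteration.
    for accession_dir in sorted(Path(accession_root).iterdir()):
        if accession_dir.is_dir() and is_complete(accession_dir):
            shutil.move(str(accession_dir), str(final_root / accession_dir.name))
            promoted.append(accession_dir.name)
    return promoted
```

Because the scan only reads the directories and the _content listings, running it by hand after each batch is harmless: incomplete accessions stay where they are until a later scan finds them complete.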