Computing Cluster Project

Project Concluded: September 25, 2020

DB Reference: dc_sra_comp_cluster

Last Updated: September 26, 2023

Part of the Genetic Analysis Project

Introduction

The purpose is to provide sufficient computing power for genetic analysis with ease of automatic management.

The computing cluster comprises several bare-metal servers equipped with high-performance Xeon CPUs, as well as a mix of virtual machines (VMs) used for miscellaneous purposes and dynamic requirements.

The servers are installed in the "Computing" Rack, which features dual-way power supply with a capacity of 8800W each. The servers are connected to each other via a high-speed 40G fiber LAN.

Specification & Structures

As of April 2023, the system has the following specification:

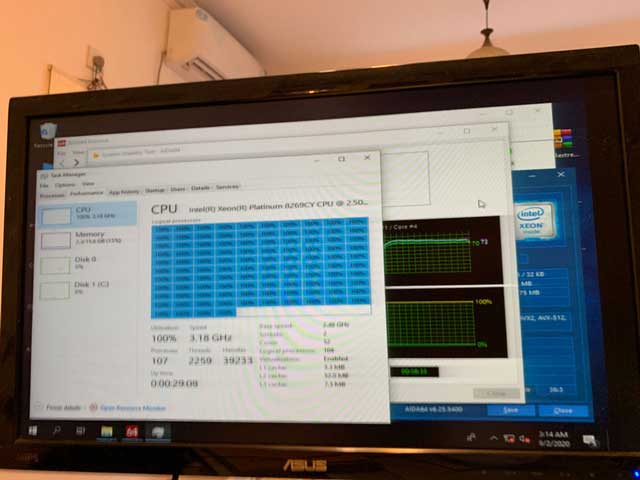

Hardware: 3 * DELL R740 each with dual Intel Xeon Platinum 8269CY & Hexa-Channel 192G DDR4 DRAM.

Hardware: 3 * DELL R630 each with dual Intel Xeon E5-2650v4(was E5-2678V3) & Quad-Channel 64G DDR4 DRAM.

Disk: All server has a RAID 0 virtual drive consist of 6 * 480G SSD

Host OS: Debian 10 & Windows Server 2019

Access Point(s): VPN or Proxy only

Detailed Description

The computing cluster is currently focused on providing computing power for the genetic analysis project. Debian 10 was installed on two R740 servers. The scripts running on these machines actively scan a specific location in the storage cluster to find pending jobs. Each machine can process up to 17 jobs simultaneously. Once a job is finished, its results are placed in designated folders on the storage backend based on it's status, and another job is then added to the processing pool. This simplifies the job scheduling process by avoiding the need to deploy a system like Slurm. However, it may be possible to deploy such a system in the future once the genetic analysis project is concluded.

The other R740 was configured as a hypervisor to provide high-performance virtuual machines in addition to X10QBL which is for general purpose VMs.

Most R630s were configured to have Windows Server 2019 as they needs to provide relatively high performance as well as flexibility of running different software, some of them requires a GUI.

Given the independent nature of each job, the computing cluster prioritizes disk performance, which is why RAID 0 was implemented. In the event of a disk failure and the subsequent removal of the array from service, the only potential loss is jobs that were being processed on that particular machine (up to 17). Since all data is stored on the storage cluster, recovering from this situation is relatively simple and only involves replacing the faulty disk and redeploying the analysis scripts.

To conclude, The Computing Cluster Project involves developing and deploying analysis scripts to estimate the necessary computing power, identifying the most cost-efficient hardware combinations, utilizing existing hardware, purchasing and installing parts, configuring RAID, installing OS and drivers, configuring the OS to support functions such as RDMA, and conducting stability tests.