Global Access Project

Project Concluded: November 01, 2022

DB Reference: dc_net_global_access

Last Updated: September 26, 2023

Introduction

By the end of 2022, almost a hundred services were hosted in my mini datacenter. Providing good access to them from anywhere in the world, for visitors and for myself, is challenging. Additionally, exposing every service directly to the public internet is not good practice.

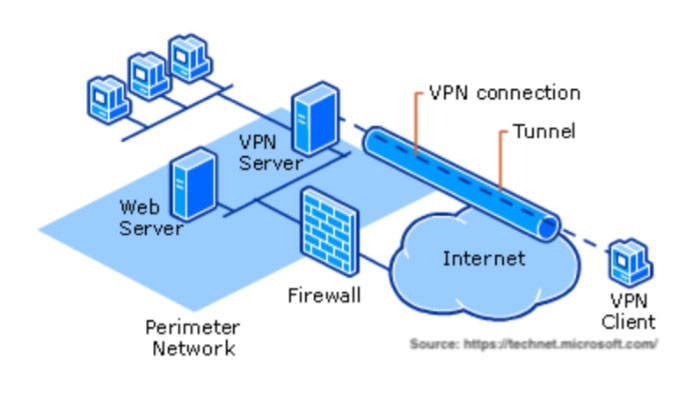

The Global Access Project was created to offer visitors a range of access points worldwide. Public services are now protected behind a reverse proxy or a suitable firewall, while a set of proxies was developed for internal-only services, integrating with the access points. For more information about the internal proxies, refer to Internal Proxies & VPN Project.

Specification & Structures

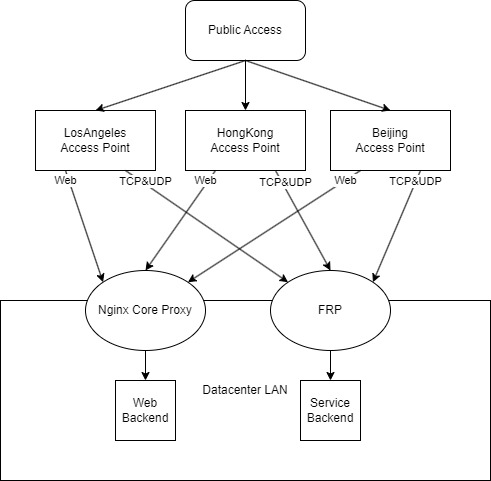

As of April 2023, the system has the following specification:

Hardware: 6 virtual servers (1 CPU core and 1 GB memory each) in 3 locations.

Access Point(s): HongKong01, LosAngeles01, LasVegas01, Beijing01, Beijing02, Beijing03

Software: frp + Nginx + Bash + Socat

Backup & Redundant Nodes: HongKong02-04, LasVegas02-09, NewYork01

Detailed Description

In China, the quality of access varies depending on location. The aim is to automatically route visitors to their nearest access point with the best possible route to the data center. The project was split into three parts: IP assignment, access points and networks, and proxy software and strategy. The majority of the project is completed and now in use in the production environment. However, for some services, visitors from China still have to use an overseas access point because of network censorship, which can restrict access even when nothing illegal is involved.

Proxy Software & Strategy

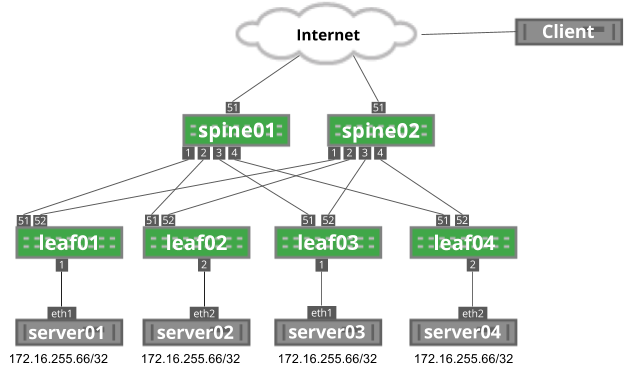

The services routed through the access points can be roughly categorized into three types: web servers that can be hidden behind a reverse proxy, general services that require either TCP or UDP port forwarding, and services that require both forwarding and the ability to obtain the source IP.

Nginx was deployed on every access point as a reverse proxy for web services. For services requiring port forwarding (such as Remote Desktop), the frp server was installed on the access points to accept reliable, client-initiated proxy connections. This allows a client machine to expose a service through any access point without requiring changes on the access point itself.

Both Nginx and frp offer a way for the service behind the proxy to obtain the real visitor IP, such as through the X-Forwarded-For header. The services behind the proxy were then configured to read this information. For services that don't support this feature, like Minecraft servers, the IP was obtained indirectly through the authentication server, which supports web proxying and can therefore obtain the real IP.
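As a rough illustration of how a service behind the proxies might read the visitor IP from X-Forwarded-For, here is a minimal Python sketch. It assumes every proxy in the chain appends the address it received the connection from, and that the addresses of my own proxies are known. The function name `client_ip_from_xff` and the example addresses are hypothetical and not taken from the actual setup.

```python
import ipaddress


def client_ip_from_xff(header_value, trusted_proxies):
    """Return the right-most X-Forwarded-For entry that is not a trusted proxy.

    Entries further to the left can be forged by the visitor, so the header
    is walked from the right and only our own proxies are skipped.
    Returns None if no usable address is found.
    """
    trusted = {ipaddress.ip_address(p) for p in trusted_proxies}
    hops = [h.strip() for h in header_value.split(",") if h.strip()]
    for hop in reversed(hops):
        try:
            addr = ipaddress.ip_address(hop)
        except ValueError:
            # A malformed entry means the rest of the chain cannot be trusted.
            return None
        if addr not in trusted:
            return str(addr)
    return None


if __name__ == "__main__":
    # Hypothetical chain: visitor -> access point (198.51.100.10) -> core proxy -> service
    print(client_ip_from_xff("203.0.113.7, 198.51.100.10", ["198.51.100.10"]))
```

Walking from the right rather than taking the first entry matters because anything a visitor sends in their own X-Forwarded-For header ends up on the left side of the list.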

Deployment

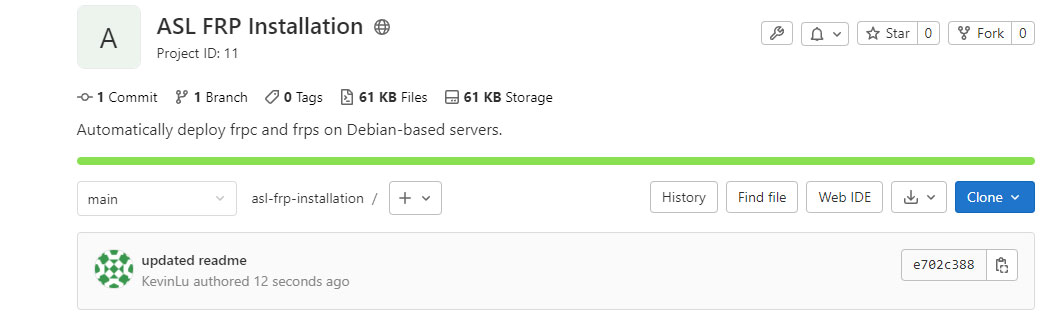

For Nginx, the routing policy can be configured at the core proxy server. However, for frp, I have to manually configure the frp client on each machine for every new service. To solve this issue, I developed a shell script that automatically downloads frpc, registers it as a system service so it starts on boot, and provides a prototype configuration. With each deployment, all I have to do is run a command, wait for it to finish, and fill in the password, the desired port, and the proxy type. This greatly improves the efficiency of service deployment. The script can be found here, but use it at your own risk, as its content may change in the future to meet other goals.
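To give an idea of what such a prototype configuration could look like, the sketch below renders one in Python. It is not the shell script mentioned above. It assumes frp's older INI-style client configuration (`[common]` with `server_addr`, `server_port`, and `token`, plus one section per proxy); newer frp releases use a different format, so check the version in use. The function name, hostname, token, and ports are placeholders.

```python
def render_frpc_config(server_addr, server_port, token, name,
                       proxy_type, local_port, remote_port,
                       local_ip="127.0.0.1"):
    """Render a minimal frpc configuration in the legacy INI format.

    All values are supplied by the caller; nothing here reflects the
    real access points or credentials.
    """
    if proxy_type not in ("tcp", "udp"):
        raise ValueError("proxy_type must be 'tcp' or 'udp'")
    lines = [
        "[common]",
        f"server_addr = {server_addr}",
        f"server_port = {server_port}",
        f"token = {token}",
        "",
        f"[{name}]",
        f"type = {proxy_type}",
        f"local_ip = {local_ip}",
        f"local_port = {local_port}",
        f"remote_port = {remote_port}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical example: expose Remote Desktop (3389) on port 13389 of an access point.
    print(render_frpc_config("ap.example.com", 7000, "CHANGE_ME",
                             "rdp", "tcp", 3389, 13389))
```

The three values left to fill in after deployment (password, port, type) map to `token`, `remote_port`, and `type` in this sketch.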

Network Routing

In China, there are three major ISPs: China Telecom, China Unicom, and China Mobile. Each of them has a different international routing policy for different destinations, data centers, and client locations in China. Additionally, major hosting providers like Tencent Cloud offer direct network access to all three peers, which can be used as a traffic relay. This makes it challenging to find the right access point with relatively better connections. Compounding this issue, the network quality can vary greatly at different times of the day, making testing even more complicated.

"Each one of these companies offers several tiers of IP transit ranging from inexpensive to very expensive. Each tier serves a specific purpose and comes with its own set of issues." - BandwagonHost

The aim is to identify hosting providers that offer adequate network bandwidth and traffic, as well as reliable network quality, that can complement my small datacenter.

This section can be divided into three sub-parts: identifying the appropriate network, selecting the appropriate hosting provider, and testing the quality of the connection.

After conducting some research, the following networks were identified as potential solutions.

- CN2 GIA (Global Internet Access): China Telecom's premium international access. It's the most popular choice, but with so many users there may be congestion during peak hours.

- AS9929: China Unicom's premium international access. There are few providers, and it's usually one-way transit, meaning only one direction of the traffic goes through it. There aren't many reviews available for reference.

- AS4837 (San Jose): China Unicom's high-end international access. While it may not be comparable to AS9929, it offers a more accessible solution and may potentially provide better service during peak hours.

- CMI: China Mobile's international direct access. It's the best option for China Mobile users if not routed through CN2, but it's not what I need.

- IPLC/IEPL: International private lines that offer no censorship and the best possible route. However, they are ridiculously expensive, and there are no reliable providers at the moment, so they will be skipped for now.

It's difficult to determine which option should be the final choice, as each one has its own pros and cons. As there are a number of reviews for CN2 GIA, the decision was made to start with CN2 GIA. It's worth noting that the access route depends solely on the hosting provider, and it's possible for a provider to route China Unicom and China Mobile users through China Telecom's CN2 network, as it could provide a better network for visitors from different ISPs.

For the US access point, I tried two CN2 GIA providers: dmit and BandwagonHost (which also routes through dmit for now). Both providers passed the stability testing smoothly. My testing was neither professional nor official, but it did provide the desired outcome: I didn't notice any lag in any service during most times of the day. Ultimately, I decided to go with BandwagonHost for its flexibility in changing hosting locations and its brand reputation.
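Because network quality changes over the day, an informal test like this benefits from grouping measurements by hour. The Python sketch below shows one possible way to do that. It is not the procedure actually used here: it simply times TCP handshakes and reports the median latency and failure rate per hour. The function names and sample values are made up for illustration.

```python
import socket
import statistics
import time
from collections import defaultdict


def tcp_connect_ms(host, port, timeout=3.0):
    """Time one TCP handshake in milliseconds; return None if it fails."""
    start = time.perf_counter()
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return (time.perf_counter() - start) * 1000.0
    except OSError:
        return None


def summarize_by_hour(samples):
    """Group (hour_of_day, latency_ms_or_None) samples into per-hour statistics."""
    buckets = defaultdict(list)
    for hour, latency in samples:
        buckets[hour].append(latency)
    report = {}
    for hour, values in sorted(buckets.items()):
        ok = [v for v in values if v is not None]
        report[hour] = {
            "median_ms": statistics.median(ok) if ok else None,
            "loss": 1 - len(ok) / len(values),  # share of failed attempts
        }
    return report


if __name__ == "__main__":
    # Made-up samples: evening peak (20:00) versus early morning (04:00).
    demo = [(20, 180.0), (20, 220.0), (20, None), (4, 150.0)]
    for hour, stats in summarize_by_hour(demo).items():
        print(hour, stats)
```

In practice, `tcp_connect_ms` would be called on a schedule from a machine inside China against each candidate access point, and the collected samples passed to `summarize_by_hour`.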

For the Asia-Pacific region, Hong Kong is the best location. I went through a similar process and ultimately chose to host the Hong Kong access point with Tencent Cloud.

For each geographical location, at least one redundant node was deployed. These nodes also have other tasks in addition to serving as redundancies.

Web Reverse Proxies & Load Balancer

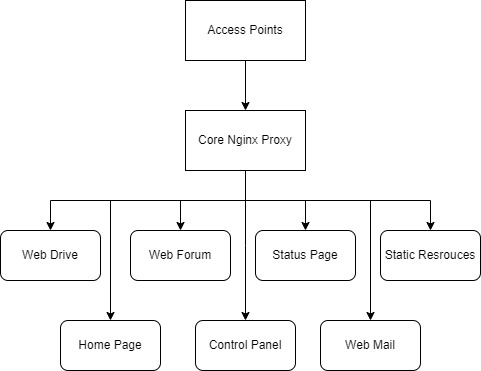

Due to the nature of frp, no additional server changes need to be made each time a client initiates a new proxy connection. However, for Nginx, it's a different story.

There are two ways to configure the Nginx proxies. The first method is to synchronize the configuration to every access point each time a new website is added, which requires a tool to synchronize and apply configurations. The second method is to have each access point forward all traffic to another reverse proxy located in the datacenter, where the detailed routing policy is applied. This reduces the scope of modifications required each time a change is made. I chose the latter option. The downside is that if the core proxy goes offline, all hosted websites will also be offline. I'm still considering a safety measure for this scenario. For now, the reliability of Nginx is sufficient for my non-mission-critical websites.
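To make the second method more concrete, the Python sketch below renders a catch-all Nginx server block of the kind an access point could use to hand every request to the core proxy. It illustrates the idea and is not the configuration actually deployed. The function name and upstream address are hypothetical, and how the access point reaches the core proxy (tunnel, private link, and so on) is deliberately left out.

```python
def render_edge_server_block(core_upstream, listen_port=80):
    """Return an Nginx server block that forwards every request to the core proxy.

    core_upstream is a "host:port" string for the core reverse proxy.
    """
    lines = [
        "server {",
        f"    listen {listen_port} default_server;",
        "    server_name _;",
        "    location / {",
        f"        proxy_pass http://{core_upstream};",
        "        # Keep the requested hostname so the core proxy can route on it.",
        "        proxy_set_header Host $host;",
        "        # Append the visitor address for services that read X-Forwarded-For.",
        "        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;",
        "    }",
        "}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical core proxy address.
    print(render_edge_server_block("10.0.0.5:8080"))
```

Since this block does not mention any individual website, it stays identical on every access point, and adding a site only touches the core proxy.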

Status

For the status of the access points, please refer to Services & Status.

Future Work

SmartDNS

Currently, the SmartDNS feature has not been implemented, so visitors have to manually select the access node they want within the embedded configuration. However, I plan to implement this feature in an upcoming project: Smart DNS Project.

AnyCast

In addition to SmartDNS, anycast is also being considered. For more information: Anycast Access Project.

frp Proxy

Although I haven't had any luck yet, frp does provide a client IP field in its configuration, which means it's possible to have a central frp proxy client in the LAN that forwards traffic to other services. This would eliminate the need to deploy frp on every VM and is worth considering. However, there is a major downside: all active connections will be dropped whenever I add a new frp connection or remove an existing one, since a service restart is required. Therefore, whether or not this will turn into a project is still uncertain.